
March 1, 2026

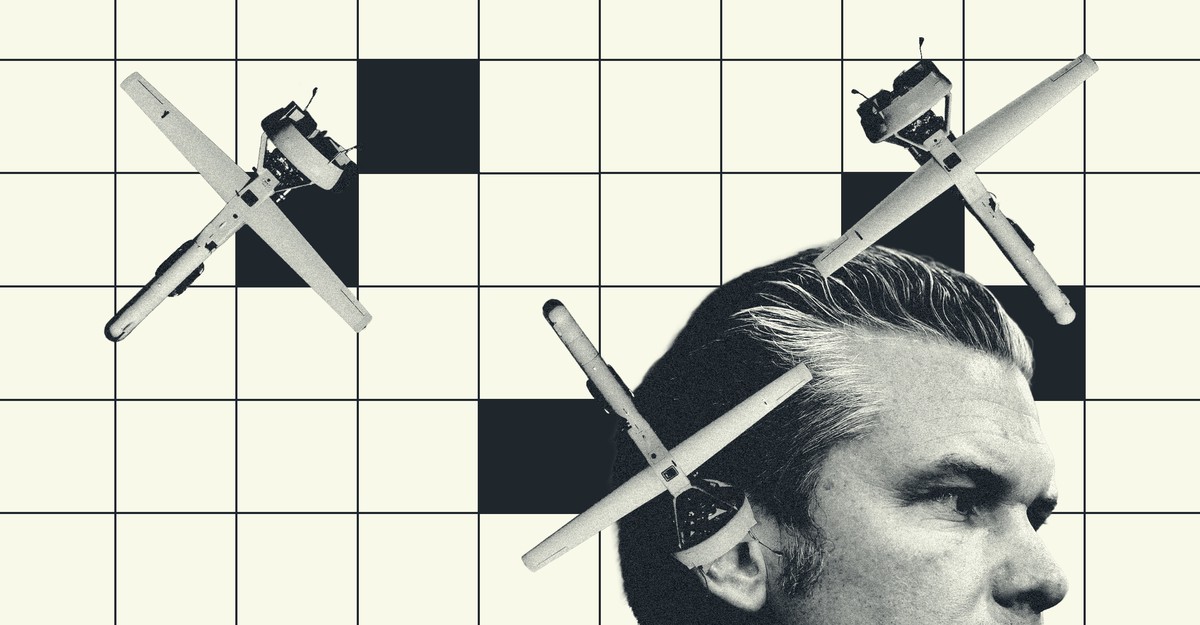

Inside Anthropic’s Killer-Robot Dispute With the Pentagon

New details on precisely where the lines were drawn

TL;DR

- The Pentagon sought to renegotiate its contract with Anthropic, an AI company whose model is used in classified government systems.

- Disagreements centered on removing ethical restrictions Anthropic had placed on its AI, particularly regarding mass domestic surveillance and autonomous killing machines.

- Anthropic agreed to remove some loophole phrases like "as appropriate" but refused to allow its AI to analyze bulk data collected from Americans.

- The company also objected to the Pentagon's desire to use its AI in autonomous weapons, citing concerns about reliability and potential harm to civilians or troops.

- Anthropic argued that the distinction between cloud-based AI and edge systems (like drones) is increasingly blurred, making it difficult to ensure AI is not involved in kill decisions.

- Anthropic's stance contrasted with that of OpenAI, whose CEO, Sam Altman, negotiated a new deal with the Pentagon that reportedly involves using AI only in the cloud, despite some employee concerns.

- Following the breakdown of negotiations, Pete Hegseth directed U.S. military contractors to cease business with Anthropic.
