
March 10, 2026

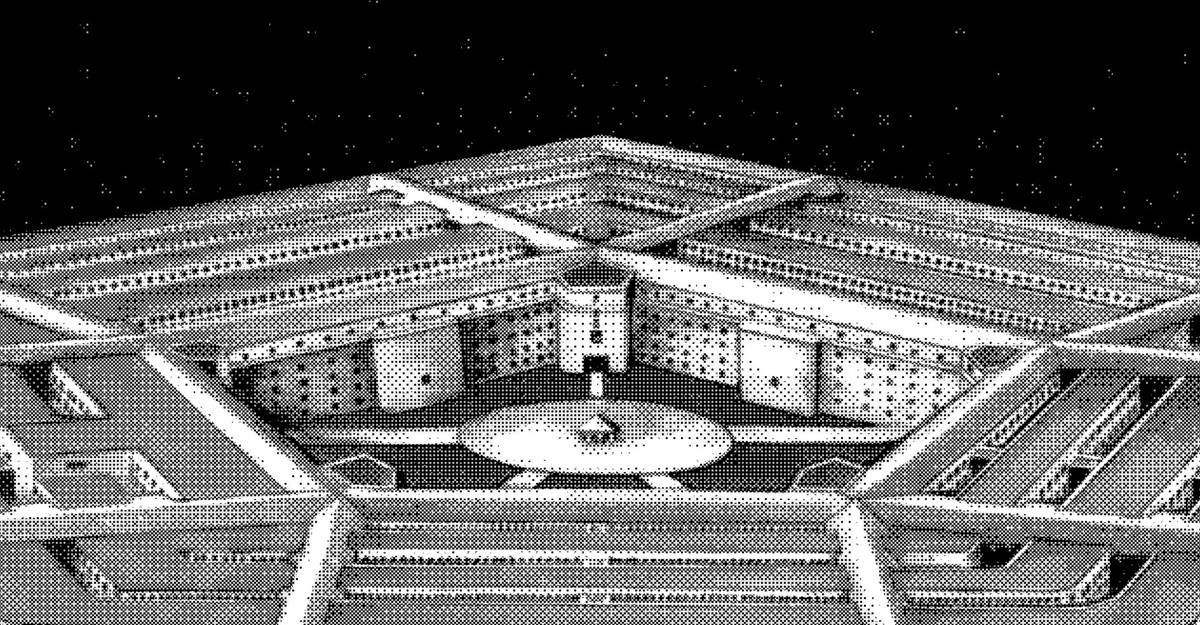

What Anthropic’s Clash With the Pentagon Is Really About

The weekslong conflict between Anthropic and the Department of Defense is entering a new phase. Last week, DOD designated Anthropic a supply-chain risk, a label that effectively forbids Pentagon contractors from using its products. This morning, the AI company filed a lawsuit against DOD alleging that the government’s actions were unconstitutional and ideologically motivated. Then, this afternoon, 37 employees from OpenAI and Google DeepMind, including Google’s chief scientist, Jeff Dean, signed an amicus brief in support of Anthropic. In effect, they backed one of their employers’ greatest business rivals, even as OpenAI itself has struck a controversial new contract with DOD.

TL;DR

- Anthropic filed a lawsuit against the Department of Defense, calling its designation as a supply-chain risk unconstitutional and ideologically motivated.

- 37 employees from OpenAI and Google DeepMind signed an amicus brief supporting Anthropic, a business rival of their employers.

- The conflict stems from disagreements over how the military can use Anthropic's AI systems, particularly regarding mass surveillance and autonomous weapons.

- No legal framework yet governs generative AI, including its use in autonomous weapons or in data collection by federal agencies.

- Employees supporting Anthropic expressed concern that AI could enable mass surveillance by correlating disparate data streams.

- Anthropic is not entirely opposed to AI in autonomous weapons but believes current models are not ready for such applications.

- The broader issue is that AI technology is advancing faster than the rules meant to govern it, with consequences for education, copyright, employment, and climate goals.

- Both the Trump administration and Congress draw criticism for responding to AI development slowly and in ways that may be overly controlling.

- AI companies are simultaneously warning about risks and racing to build more capable models, while no one is clearly accountable for how those models are developed and used.
